The missing half of digital health: Exploring pathways for AI to scale in LMIC health systems

by Anirooddha Mukherjee, Boijayanti Sarker and Dr. Puneet Khanduja


Mar 31, 2026 7 min



Over the past decade, health ministries in many low- and middle-income countries (LMICs) have experimented with a new generation of AI tools in everyday healthcare settings. In Pakistan, India, and parts of Sub-Saharan Africa, AI systems are being tested to help screen patients for tuberculosis. Hospitals in Kenya and Bangladesh have begun testing algorithms that flag pregnancies at higher risk of complications. In Nigeria, primary health centers have piloted digital tools that offer guidance during clinical decisions. Many of these experiments have produced promising results, which suggest that the technologies can work. Yet very few have moved beyond the pilot stage, a fate they share with many earlier digital health tools.

The challenge has been the system rather than the technology, a reality the global health community has been slow to acknowledge. The failure to move beyond pilots, described in the digital health literature as pilotitis, is widespread across LMIC health systems. Pilots are plentiful, but integration into existing health systems is rare. Many innovations show strong results under short-term donor funding, only to collapse once that support ends. The technology itself may remain viable, but lasting impact depends on prerequisites such as continuity of server space, re-skilling of staff, and financial planning.

This gap between promising pilots and real-world scale is not unique to health systems. Across industries, most AI initiatives struggle to move beyond proof of concept. Industry estimates indicate that nearly a third of generative AI projects may be abandoned after the pilot stage due to unclear value or implementation barriers.

The uncomfortable truth is that most of the scaling frameworks applied in LMICs were designed for high-income health systems with different infrastructure, institutional maturity, and health worker profiles. These frameworks often fall short in LMIC settings, which highlights the need to develop approaches customized to their own realities.

Why the standard playbook fails

In practice, the dominant approach to AI in health has been to build technology outside the systems it is meant to serve. Tools are generally built in high-income countries, tested in donor-funded pilots, and validated under controlled conditions. They are then handed to a public health system that had no meaningful involvement in their development. This model has consistently struggled at the scaling stage, and the reasons are well documented. IBM Watson for Oncology is a pertinent example. Trained on data from a US cancer center and deployed across hospitals in India, Thailand, and South Korea, it collapsed in practice because its recommendations were clinically incompatible with local health systems that had no role in shaping it.

Several systemic constraints limit the ability of AI tools to scale within LMIC health systems. The first constraint is connectivity. Many AI tools assume reliable broadband and stable digital infrastructure. These conditions are often absent in rural areas, where much of the disease burden in LMICs is concentrated. Infrastructure mismatches also go beyond bandwidth. In one real-world deployment of an AI tool for chest X-rays, recurring incompatibilities between the AI software and existing X-ray equipment were documented, caused by differences in image formats and acquisition protocols.

The second constraint is the digital maturity of health workers. It receives the least investment and has the most optimistic assumptions. Frontline workers are often highly skilled at navigating community health and adapting clinical practice under resource pressure. However, their experience with digital tools is recent, uneven, and layered on top of workflows that have not changed significantly since the paper register. An AI system that requires new data entry habits or needs health workers to interpret model outputs without sustained support will not be used consistently, regardless of performance.

The third constraint is data. LMIC health information systems are fragmented, mostly built to serve government reporting cycles rather than clinical decision-making. An AI tool trained on data from Nairobi will not perform with the same accuracy in rural Kenya, let alone in a different country entirely.

The fourth, and most structurally dangerous, constraint is funding design. Most pilots operate within short-term donor funding cycles. Scaling a digital health intervention into the national infrastructure requires sustained investment for many years. When funding falls short, the intervention stalls before it can reach scale.

Workable pathways

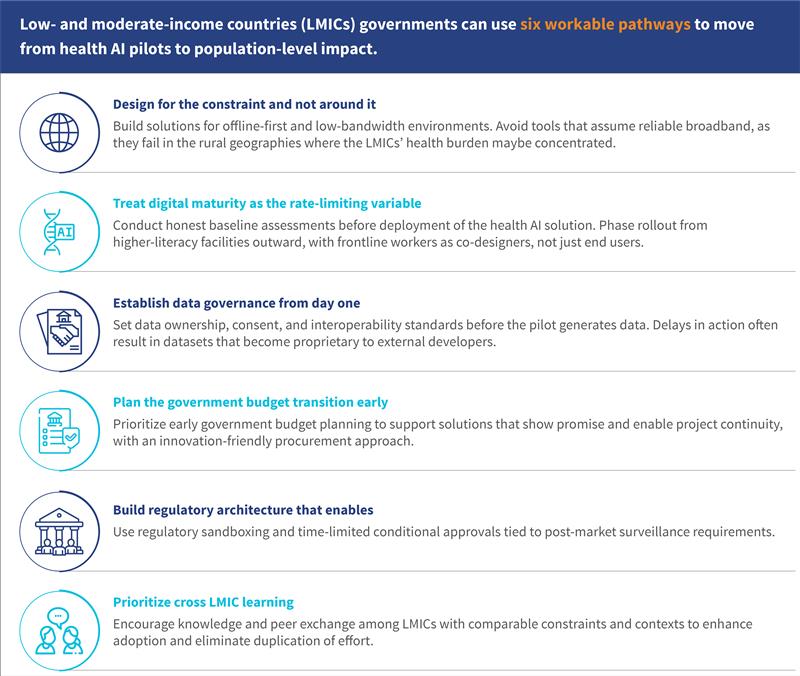

The first pathway is to design specifically for the constraints that LMICs face. AI tools must function offline, operate under low-bandwidth conditions, and work across a wide range of devices. For example, computational power (commonly referred to as compute) is a binding constraint that rarely features in pilot design. Cloud-based AI inference requires reliable internet and ongoing server costs that few LMICs can sustain at scale. High-income health systems often treat the compute infrastructure, such as stable cloud access, sufficient processing power, and manageable API costs, as background conditions.

Health systems must actively plan their AI tools for use in low-resource settings. One option is the use of small language models, or “small AI”: lightweight, locally deployable models that run on low-cost devices without cloud connectivity. Large-scale AI systems may deepen existing inequities by perpetuating bias, because LMIC populations are underrepresented in their training data. Small AI offers a more viable and contextually appropriate alternative. The TB detection tools with the greatest durability at scale, including CAD4TB deployments in Pakistan and Bangladesh, ran on laptops without internet access and integrated with mobile X-ray units in remote communities. Features such as voice interfaces for workers with limited text literacy, and outputs aligned with the decisions that community health workers make, were found to suit the context better.

Task-specific small language models (SLMs) trained on locally representative clinical data and deployable on low-cost hardware represent one of the most underleveraged opportunities in health AI for LMICs. Unlike general-purpose models that depend on cloud connectivity and commercial APIs, sovereign SLMs for narrow use cases such as TB X-ray interpretation or high-risk pregnancy detection can outperform larger models on the tasks that matter, while keeping data governance and long-term costs firmly in government hands.

Based on this agenda, MSC (MicroSave Consulting) cofounded the Alliance for Inclusive AI with BFA Global and Caribou. We are committed to developing practical “small AI” solutions that expand opportunity for underserved communities across the Global South.

The second pathway prioritizes the digital readiness of health workers well before deployment begins. It starts with an honest assessment of baseline digital capacity, led by health ministries. A phased rollout would then begin in facilities with higher digital literacy, generate evidence and frontline champions, and expand to the periphery with those champions as peer trainers. In this way, frontline workers would actively help design the tools.

The third pathway is data governance from the outset. Governments that launch AI pilots must establish data ownership, consent frameworks, and interoperability standards before deployment. These foundations must be in place before the pilot has generated a dataset that becomes proprietary to an external developer. India’s Ayushman Bharat Digital Mission, even as it continues to mature operationally, represents a serious effort to build the data architecture that AI-enabled health systems require. The lesson for other LMICs is to invest in this infrastructure before the AI tools arrive.

The fourth pathway is to plan the government financing transition early. Every pilot should include a clear and costed pathway for integration into public budgets well before donor funding expires. Governments must engage finance ministries early, show value through the cost per disability-adjusted life year (DALY) averted, and negotiate innovation-friendly procurement frameworks, especially at provincial or state levels. When governments plan this transition early, AI-enabled tools offer the potential to reduce cost per patient reached and strengthen the evidence base for more informed healthcare budget allocations at national and sub-national levels.
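To make the cost-per-DALY argument concrete, the arithmetic a ministry team might put in front of a finance ministry can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a costing method taken from any specific programme: the class, the function names, the threshold, and every figure below are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PilotCosting:
    """Hypothetical costing inputs for one pilot (illustrative only)."""
    incremental_cost_usd: float  # extra yearly cost versus standard care
    dalys_averted: float         # DALYs averted per year versus standard care


def cost_per_daly_averted(pilot: PilotCosting) -> float:
    """Incremental cost divided by DALYs averted."""
    if pilot.dalys_averted <= 0:
        raise ValueError("DALYs averted must be positive to compute the ratio")
    return pilot.incremental_cost_usd / pilot.dalys_averted


def is_within_threshold(pilot: PilotCosting, threshold_usd: float) -> bool:
    """Compare the ratio with a willingness-to-pay threshold chosen by the government."""
    return cost_per_daly_averted(pilot) <= threshold_usd


# Made-up figures: USD 120,000 in extra yearly cost, 400 DALYs averted.
example = PilotCosting(incremental_cost_usd=120_000.0, dalys_averted=400.0)
print(f"Cost per DALY averted: USD {cost_per_daly_averted(example):.2f}")
```

The threshold is left as an input because it is a policy choice that differs between countries and budget cycles.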

The fifth pathway is a regulatory architecture that enables responsible and timely deployment. Many LMIC ministries lack comprehensive AI-specific regulatory frameworks. In such cases, waiting for a fully developed framework can stall useful innovations. Regulatory sandboxing, time-limited conditional approvals tied to post-market surveillance, and regional regulatory harmonization frameworks adapted from pharmaceutical precedents offer pragmatic mechanisms to introduce AI tools while maintaining safeguards.

The sixth pathway, and the most underused, is LMIC-to-LMIC learning. Contexts that share infrastructure constraints, workforce realities, and regulatory gaps are better positioned to learn from each other than from high-income systems where these conditions do not exist. Structured knowledge exchange, supported by regional bodies, offers a more grounded and transferable basis for scaling than imported playbooks ever could.

Figure 1: Six pathways for governments to move from health AI pilots to population-level impact

The way forward

The global health community has invested considerable effort to show that AI tools can operate in low-resource settings. Much of the evidence is promising, and the technology itself is not the main obstacle.

The challenge lies in the systems into which these tools must be introduced, as health systems are complex institutions and not controlled environments. Scaling a technology requires infrastructure to support it, workers who can use it confidently, data systems that can provide reliable inputs, governance structures that can regulate it, and public budgets that can sustain it. In many LMIC health systems, these foundations must be deliberately built.

This is a familiar lesson. Expanding coverage without governance produced the paradox we see in health insurance systems across the Global South. Pilots without pathways will produce the same paradox in AI health systems.

Success will therefore depend less on the number of promising pilot projects and more on the conditions that enable scaling. Governments that invest early in infrastructure, workforce readiness, data systems, and financing arrangements will be better positioned to move beyond experimentation.

Written by

Anirooddha Mukherjee

Manager

Boijayanti Sarker

Assistant Manager
